The hidden cost of building your strategy on everyone else’s data.

Time is a river (or a weaver, depending on the model).

In 2025, researchers published what they called the Artificial Hivemind study.¹ They tested over 70 large language models across a range of prompts. When they asked 25 different models to write a metaphor about time, the outputs collapsed into two clusters: “time is a river” and “time is a weaver.”

Entirely different model families produced indistinguishable responses. Raising the temperature didn’t fix it. Min-P sampling didn’t fix it. The creative space had already flattened. We tested this ourselves. You should too.

An ICLR 2024 study measured this in practice.² When people wrote essays with an RLHF-tuned model (the kind underneath every AI creator tool on the market), there was a statistically significant reduction in diversity across different authors’ writing. The “helpful assistant” tuning was to blame. The specific thing that makes AI tools feel useful is the same thing flattening the output.

And the convergence is invisible to the person it’s happening to.³

Now layer your favorite creator’s favorite scraping tools on top of that.

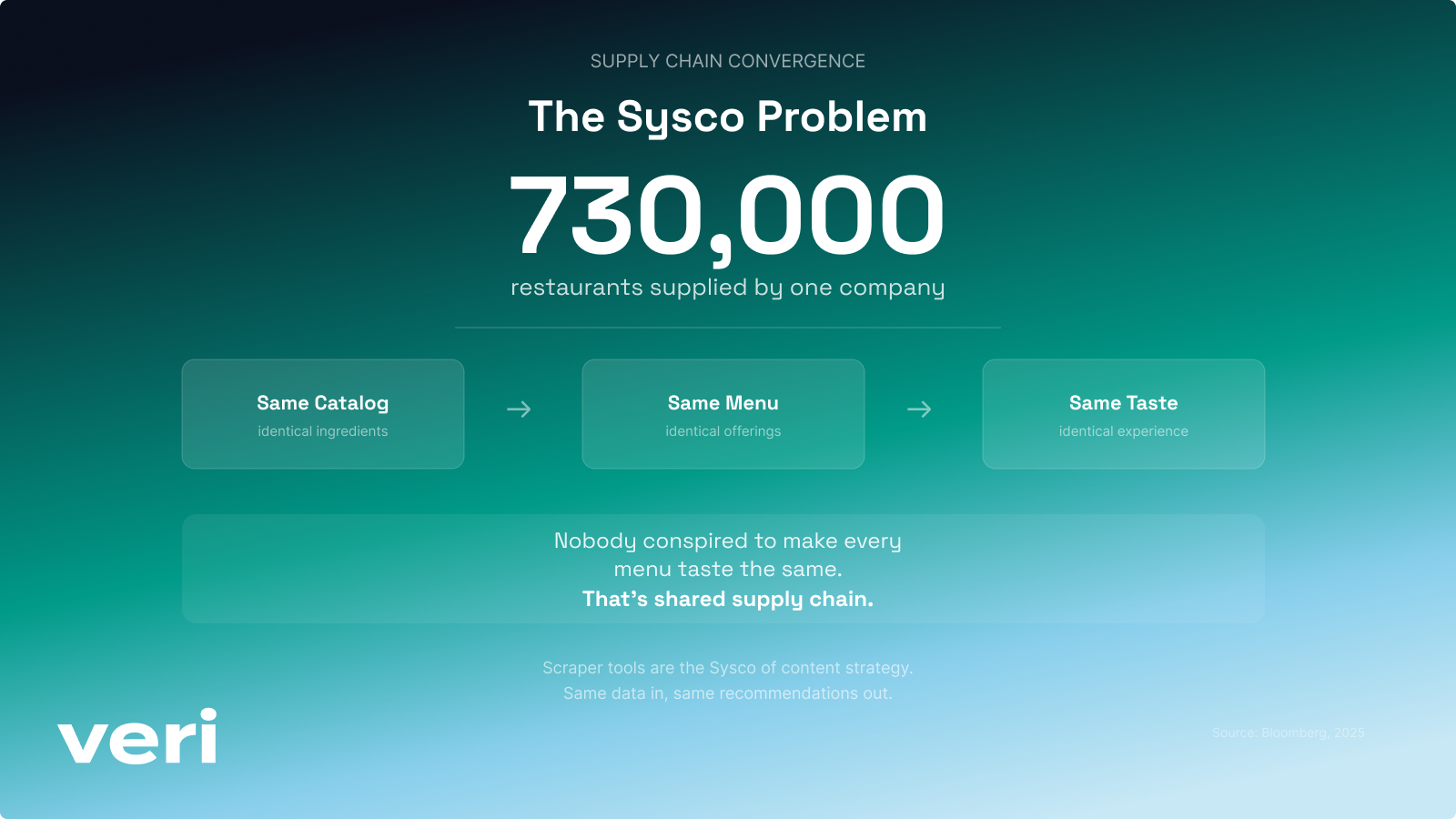

The Sysco problem.

Before AI tools ever touched a creator’s workflow, the American restaurant industry proved the pattern. Sysco, the world’s largest food distributor, supplies ingredients and pre-made dishes to over 730,000 restaurants. Each has a chef hired to build their menu while shopping from the same catalog of frozen appetizers, pre-portioned proteins, and house-brand sauces. Bloomberg documented the result: American restaurant food has converged into a sameness that diners can feel but can’t quite name.⁴

The feedback loop is identical to the one scraper tools create. More restaurants serve Sysco products, consumer taste normalizes around them, Sysco’s catalog decisions get validated, and the available selection narrows. Each cycle compresses what “restaurant food” can be. Nobody conspired to make every menu taste the same. That’s just a shared supply chain in action.⁵

The convergence effect. Your strategy was someone else’s before it was yours.

Content laundering.

There’s a category of AI tools gaining steam: idea generators, scripting tools, and the like. They scan thousands of creator channels, find videos getting 2x to 10x more views than normal (outliers), reverse-engineer why they performed, extract the title structure, psychological hook, and thumbnail concept, and sell that back to you as “your strategy.”

The recipe is the same across all of them.
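To make that recipe concrete, here is a minimal sketch of the outlier step. It is an illustration only, not any specific tool’s implementation: the function name, the use of the channel’s median as “normal,” and the sample view counts are all assumptions.

```python
from statistics import median


def find_outliers(views_by_video: dict[str, int],
                  low: float = 2.0,
                  high: float = 10.0) -> dict[str, float]:
    """Flag videos whose views are `low`x to `high`x the channel's typical views.

    Assumption: "normal" is taken to be the channel's median view count.
    Returns a mapping of video title -> multiple of the baseline.
    """
    if not views_by_video:
        return {}
    baseline = median(views_by_video.values())
    if baseline <= 0:
        return {}
    return {
        title: views / baseline
        for title, views in views_by_video.items()
        if low <= views / baseline <= high
    }


# Hypothetical channel: four ordinary videos and one breakout.
channel = {"a": 1000, "b": 1200, "c": 900, "d": 4800, "e": 1100}
print(find_outliers(channel))  # only "d" is flagged
```

Run the same filter across thousands of channels and every subscriber gets handed the same short list of breakout videos to imitate.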

The pitch is data-driven insights, harmless enough. But take a step back and what you’re actually getting is everyone else’s creative decisions pre-portioned, frozen, and plated with your name on the menu.

These outlier “tools” have thousands of paying users. Within any given content cycle, hundreds of creators are executing the same blueprint. You’re buying “data-driven differentiation,” but the math says otherwise.

The culprit is the same here. It’s the business model: distribution + the incentive to optimize for what already worked + a feedback loop where the homogenized pool becomes next cycle’s training signal. The degenerate feedback loop researchers describe in recommender systems⁶ is the outlier mechanism running at scale.

What the convergence effect actually costs your yield.

Creator yield is the percentage of your creative effort that actually reaches and resonates with your audience. Low yield means you’re paying full production cost on content that was never going to stand out.

Convergence is what kills yield before you even hit publish.

You spend the same hours, energy, and effort (maybe less than you did a year ago), but any productivity gains flatlined before you sent the prompt.

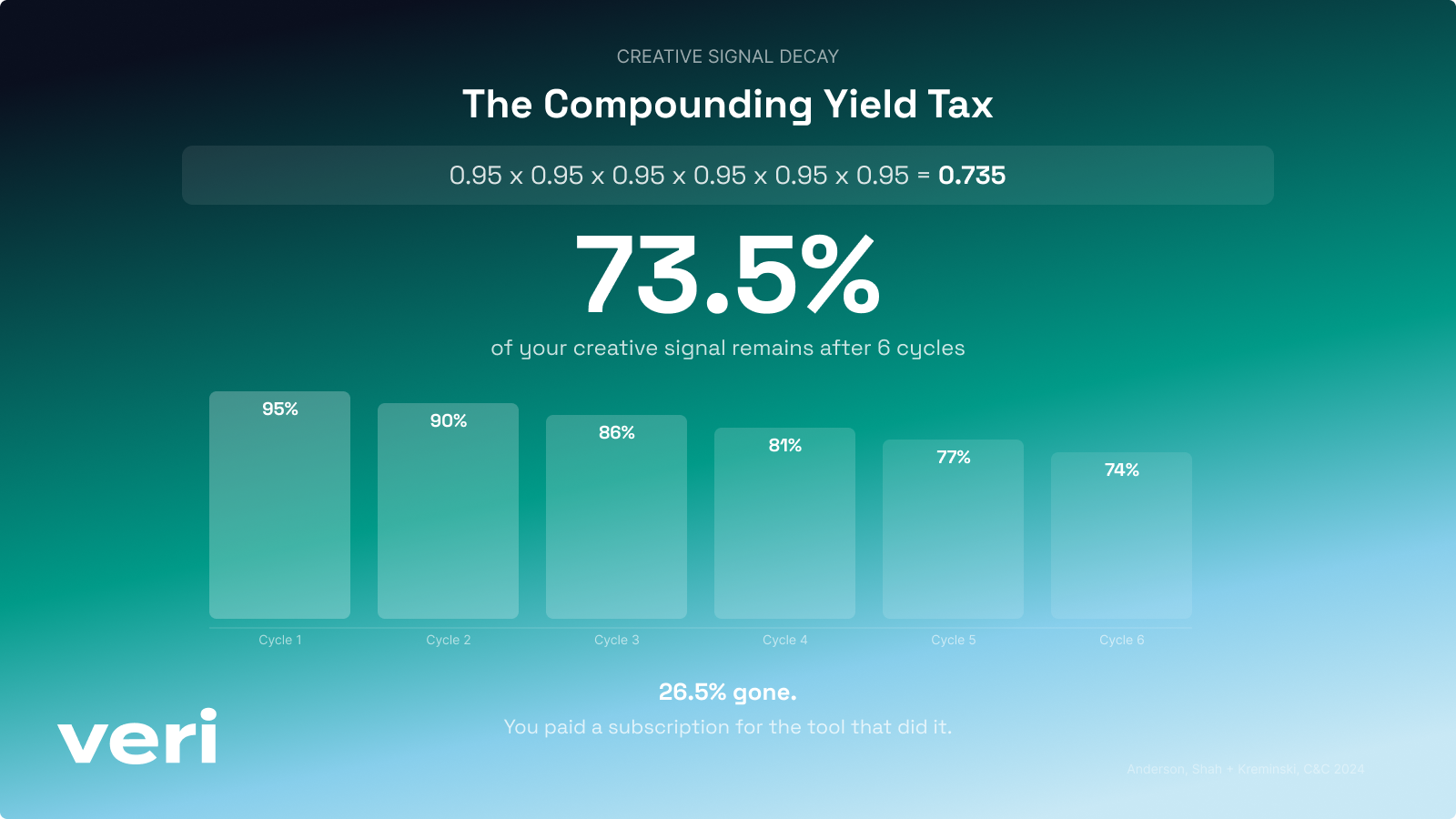

Here’s the math on why it compounds. The C&C study found homogenization effects across every participant.³ Let’s say each cycle shifts you only 5% toward the middle of the bell curve. That’s small enough to miss the first or second time, but it adds up fast across six cycles.

After six content cycles, you’ve retained 0.95⁶ ≈ 73.5% of what made your content yours. A quarter of your creative signal gone. And you paid a subscription fee for the tool that did it. That’s a compounding yield tax.
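You can check that figure yourself. The sketch below assumes the simplest possible model: each cycle keeps a fixed 95% of whatever distinctiveness was left after the previous one. The 5% rate is the illustrative number used above, not a measured value, and the function name is hypothetical.

```python
def retained_share(shift_per_cycle: float, cycles: int) -> float:
    """Share of original creative signal left after repeated proportional shifts.

    Assumption: each cycle removes the same fraction of what remains,
    so the retained share is (1 - shift) ** cycles.
    """
    if not 0.0 <= shift_per_cycle <= 1.0:
        raise ValueError("shift_per_cycle must be between 0 and 1")
    if cycles < 0:
        raise ValueError("cycles must be non-negative")
    return (1.0 - shift_per_cycle) ** cycles


# Print the retained share after each of the six cycles.
for n in range(1, 7):
    print(f"cycle {n}: {retained_share(0.05, n):.1%} retained")
# The final line reads: cycle 6: 73.5% retained
```

Swap in your own rate and cycle count. Even a 2% shift leaves you well short of where you started after a year of weekly uploads.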

The creator economy is running this experiment at a massive scale, and nobody is tracking the cost.

You can’t see it from inside.

In 1990, a Stanford graduate student named Elizabeth Newton ran a study that became one of the most cited in cognitive science.¹¹ She had people tap the rhythm of well-known songs on a table while listeners tried to guess the song. Tappers predicted listeners would name the song about half the time. Listeners got it right roughly 1 time in 40. That’s about a 20x overestimate.

As with the models, we tried this ourselves. The tapper hears the full melody in their head while they tap. The listener only hears taps.

When you make content, you hear the full melody of your creative intent: your context, the insight that sparked it, how it threads into everything else you’ve made. Your audience may enjoy it, but they don’t hear the notes in your head. They hear taps. And when a scraper tool has compressed your intent into the same structural patterns as 50 other viral outlier creators, everyone’s taps sound identical.

Getting content out fast is the game right now. Platforms reward it, and audiences do too. But as a creator, your competitive advantage is shifting from faster output to the distance you keep between your content and everyone else’s.

AI is compressing that distance one scraper tool at a time.

Every metric a creator can self-monitor looks normal or better. Convergence happens at a level you can’t see from inside your own work.³

The part everyone’s already talking about.

69% of creators are concerned about their content being used to train AI without permission.⁷ YouTube CEO Neal Mohan named AI slop his top priority for 2026⁸ while Google is training AI on its library of 20 billion YouTube videos. Most creators have no idea.⁹ OpenAI transcribed over one million hours of YouTube video through Whisper to feed GPT-4. Reddit made $203 million licensing its users’ posts to AI companies.¹⁰ Your scraping tool trains on your patterns.

The extraction is real, the backlash is rational. But extraction already has a name and a news cycle. The convergence effect doesn’t. And it’s the one actually reshaping what your content looks like before you hit publish.

Signal compounding.

You can’t measure convergence from inside your own workflow. But you can build a system that doesn’t drown in it.

The opposite of convergence is signal compounding. Every piece of content you make should reinforce what makes your content yours.

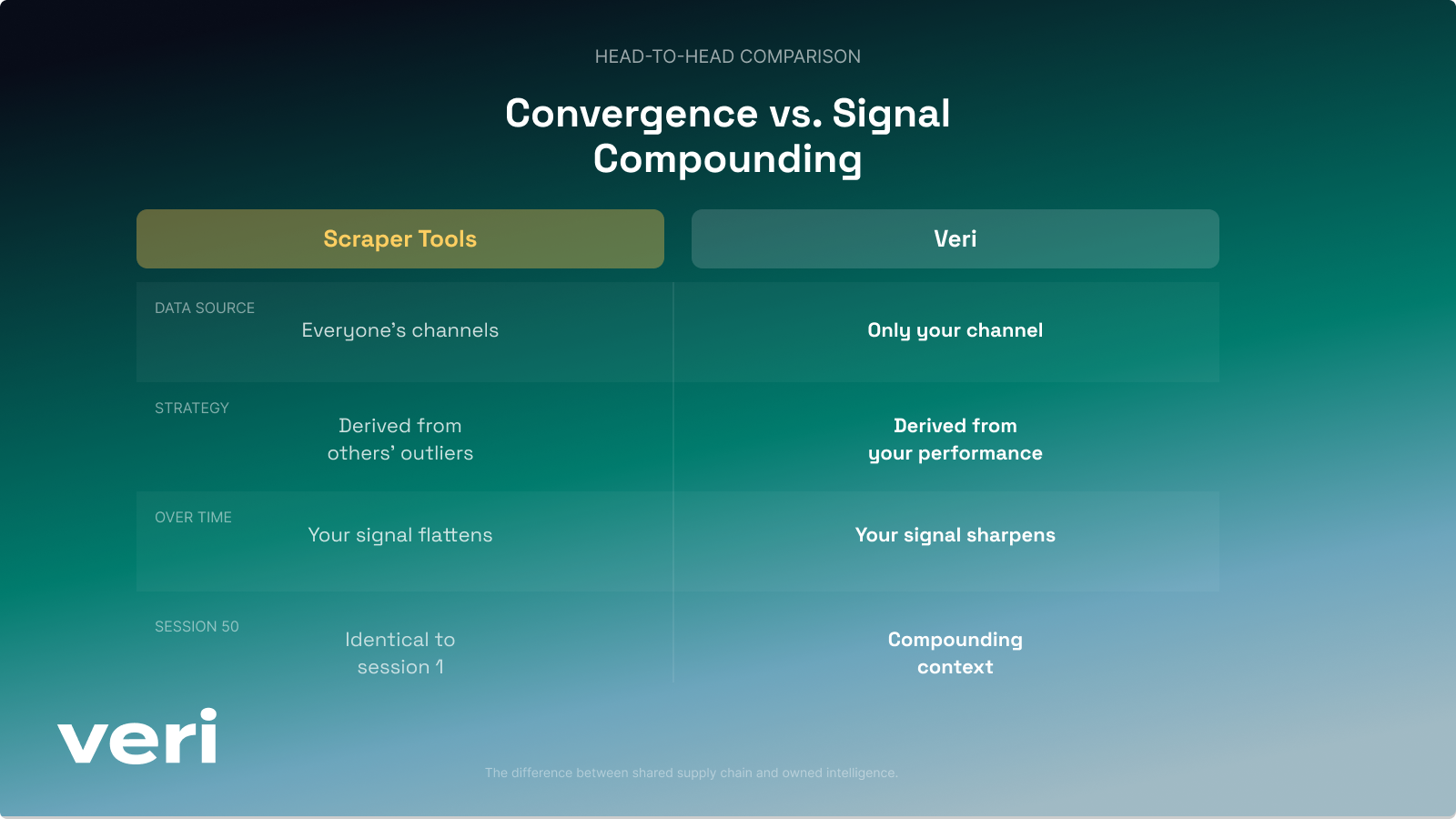

Most AI tools (some more than others) are stateless out of the box. Every conversation starts from zero. You paste in your channel data, re-explain your audience, re-describe your content patterns, and rebuild context every time.

Veri is stateful. Session 50 is smarter than session 1 because it’s built on the 49 before it.

The only channel we analyze for you is yours. We built a context graph where every interaction, every piece of content you create, every performance signal that comes back, compounds into a knowledge layer that is yours. It’s always-on, ambient architecture that gets better every time you use it.

The yield thesis still holds.

The best creators aren’t faster. They waste less.

The scraper economy makes you waste more by chasing someone else’s signal. Convergence makes you invisible. You pay the cost of production on content that was never going to stand out because it was designed by a tool that optimizes for what already worked for someone else.

Your content trains their models, and their tools make your next video look like everyone else’s. Extraction is the supply side. Convergence is the demand side.

The answer isn’t less AI. It’s AI that actually knows you.

Connect your channel at joinveri.co

Frequently Asked Questions

What is the convergence effect?

When creator tools optimize using data from other creators' channels, every creator using that tool drifts toward the same output. The tool finds what already worked, templatizes it, and sells it back to thousands of users. The result is content that looks, sounds, and performs the same. Your strategy was someone else's before it was yours.

What is content laundering?

Content laundering is when a tool scrapes thousands of creator channels, finds videos getting 2x to 10x more views than normal, reverse-engineers the title structure, psychological hook, and thumbnail concept, and sells that back to you as your strategy. The pitch is data-driven insights. What you're actually getting is everyone else's creative decisions pre-portioned, frozen, and plated with your name on the menu.

What is the compounding yield tax?

Each content cycle that uses scraped data shifts your creative output closer to the average. Research found homogenization effects across every participant. Even at a 5% shift per cycle, after six cycles you've retained only 73.5% of what made your content yours. A quarter of your creative signal gone. You paid a subscription fee for the tool that did it.

How does convergence affect creator yield?

Creator yield is the percentage of your creative effort that actually reaches and resonates with your audience. Convergence kills yield before you hit publish. You spend the same hours and energy, but any productivity gains flatlined before you sent the prompt because the output was designed by a tool that optimizes for what already worked for someone else.

What if I've already been using scraper tools and my content has drifted?

That's actually one of the best reasons to start with Veri. Veri learns from your full content history, not just your most recent videos. So even if your recent output has been shaped by external templates or someone else's strategy, your agents are still building on the patterns that made your channel yours in the first place. The more you put them to work, the more your content re-centers around your voice, your data, and your audience. The drift corrects itself through the work.

How is Veri different from scraper tools?

The only channel Veri analyzes is yours. No scraping other creators' data, no outlier detection, no reverse-engineering someone else's strategy. Veri builds a context graph where every interaction, every piece of content you create, every performance signal that comes back compounds into a knowledge layer that is yours. Session 50 is smarter than session 1 because it's built on the 49 before it.

What is signal compounding?

Signal compounding is the opposite of convergence. Every piece of content you make reinforces what makes your content yours. Most AI tools are stateless. Every conversation starts from zero. You paste in your channel data, re-explain your audience, and rebuild context every time. Veri is stateful. It compounds your creative signal instead of flattening it.